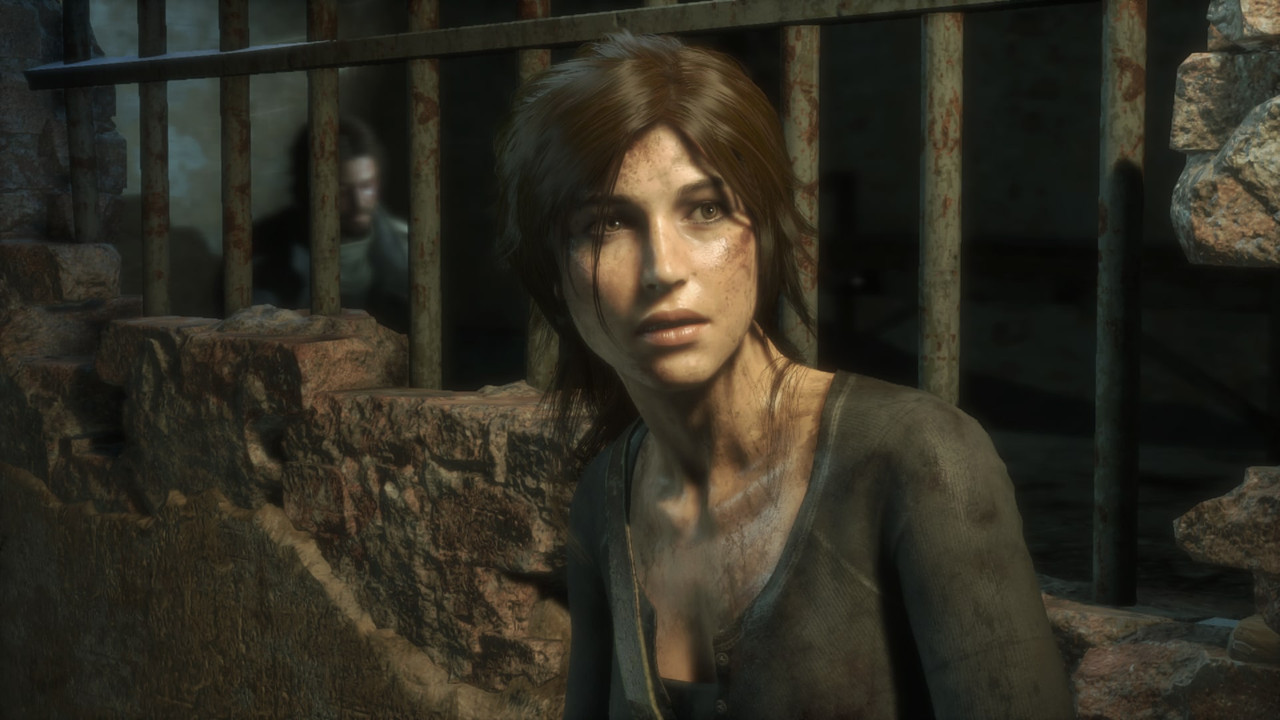

Rise Of The Tomb Raider DirectX 11 & 12 Benchmarks

DirectX 12 is still in its early stages, and I’ve decided to pick up Rise of the Tomb Raider to try out the newest API. It’s not only for the DX12 API, though; I’m a Tomb Raider fanatic. I can still remember playing the Tomb Raider games back in the 90s/2000s. For the record, I own every Tomb Raider game released across several platforms, so I’m serious when I say that I’m a TR fan. I’m excited about DX12 as well, and I’ve run tons of DX11 & DX12 benchmarking tests, along with some frame time tests. Unfortunately, we still haven’t received a “full” DirectX 12 title yet. So far we have Gears of War: UE, Hitman and now Rise of the Tomb Raider. Hitman did a great job with DX12, but there are still a few issues that need to be ironed out by the developers, Nvidia and AMD. Gears of War: UE, Hitman and TR were all built with more than one API in mind. That’s expected, since there are still a lot of gamers out there with DX11-only GPUs, although a lot of older GPUs can run at least some of DirectX 12.

I’m sure things will get much better once developers start focusing solely on either Vulkan or DirectX 12. We’ll take what they give us for now. You can check out my Hitman review here:

Now let’s get to the specs and benchmarks.

Gaming Rig Specs:

Motherboard: ASUS Sabertooth X58

CPU: Xeon X5660 @ 4.6GHz

or

CPU: Xeon X5660 @ 4.8GHz

CPU Cooler: Antec Kuhler 620 Watercooled - Pull

GPU: AMD Radeon R9 Fury X Watercooled - Push

RAM: 24GB DDR3-1600MHz [6x4GB]

or

RAM: 24GB DDR3-2088MHz [6x4GB]

SSD: Kingston Predator 600MB/s Write – 1400MB/s Read

PSU: EVGA SuperNOVA G2 1300W 80+ GOLD

Monitor: Dual 24-inch 3D Ready – Resolution - 1080p, 1440p, 1600p, 4K [3840x2160] and higher.

OS: Windows 10 Pro 64-bit

GPU Drivers:

AMD R9 Fury X @ Stock Settings – Core 1050MHz

All benchmarks use SMAA for anti-aliasing, and ambient occlusion will be the game’s built-in AO unless noted otherwise.

First, I’d like to start with the fact that this is an Nvidia “The Way It’s Meant To Be Played” title. From here on out I’ll use the label “TWIMTBP”. With that being said, the game shockingly does feature some AMD tech. TR uses AMD’s “Pure Hair” technology. Pure Hair is TressFX’s big sister and it looks even better. TressFX was featured in the 2013 Tomb Raider release. The AMD tech is open source. The Nvidia tech available is HBAO+, which is an upgrade from the 2008 HBAO version, and, most recently with the DX12 update, VXAO [Voxel-Based Ambient Occlusion]. Both HBAO+ and VXAO are closed source, locked behind Nvidia’s proprietary Gameworks. What’s weird about VXAO is that it’s only compatible with the DX11 API. Nvidia has been a little slow updating their tech to DX12. What sets the VXAO setting apart is, first, that it’s only available on Nvidia GPUs, so AMD users won’t find the setting in the options; and second, that VXAO is optimized for and only available on Maxwell GPUs. Ouch. Nvidia pushes that knife deeper into the Kepler 600/700 series GPUs.

I also have a few things I have to get off my chest just for the sake of being “fair”. I believe in fair treatment, so I normally mix up the settings depending on the situation. However, lately I’ve been seeing a trend in a lot of benchmarks across the web. Everyone knows that Pure Hair is AMD tech and HBAO+ is Nvidia tech. If you didn’t read what I’ve written above, well, now you know. So you would think that journalists would give gamers different benchmark results with certain tech [HBAO+/Pure Hair] disabled. NOPE! It seems many of them just set the Graphics settings to “Very High” and call it a day. There are two issues with this: the first being that Pure Hair is optimized for AMD GPUs; the second, obviously, being that HBAO+ is closed source and optimized for Nvidia GPUs.

So with those two facts out of the way, you would “really” think that journalists would give gamers more options than the standard “Very High” graphical setting. “Very High” doesn’t max the game 100%, so it’s just a preset. This did not happen, and obviously it gave AMD a bad look initially. What’s really messed up about the situation is that journalists will acknowledge the HBAO+ hit on AMD GPUs in the comment section or in the forums, but will not make a chart in the actual review showing that AMD’s performance increases are higher than Nvidia’s increases when HBAO+ is disabled. AMD optimized their drivers, but it doesn’t matter if no one updates their benchmark results or tests more than one setting.

One company [Nvidia] can easily optimize their drivers, and the other company [AMD] cannot optimize their drivers for closed proprietary tech [Nvidia Gameworks]. So obviously AMD is losing both battles here. Nvidia easily has access to AMD’s open-source Pure Hair. Unlike the TressFX issues in 2013, Nvidia was prepared this time. Well, AMD has been optimizing their drivers and working on TR performance with the latest Crimson 16.3 Beta Drivers. AMD has really been cranking out drivers rapidly over the past year or so.

So initially I was going to 100% max the game as I normally do and benchmark it. Instead, I think I’ll do something different this time. I’ll use the SAME settings several websites used by simply setting the “Graphical Settings” to “Very High”. Then afterwards I’ll disable HBAO+ to show you the penalty it has on AMD GPUs. Since I only have an AMD GPU to benchmark, I’ll disable the Nvidia tech. If I had an Nvidia GPU, I would disable the AMD tech for comparison. Then I would run both cards with neither company’s tech enabled for a fair comparison.
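For anyone who wants to run the same kind of comparison on their own card, here is a rough sketch of how the HBAO+ penalty could be expressed as a percentage. The function name `percent_change` and the FPS numbers in the usage lines are my own placeholders for illustration; they are not results from this review.

```python
def percent_change(baseline_fps: float, new_fps: float) -> float:
    """Return the FPS change from baseline_fps to new_fps, in percent.

    A positive result means the second run was faster than the baseline.
    """
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be greater than zero")
    return (new_fps - baseline_fps) * 100.0 / baseline_fps


if __name__ == "__main__":
    # Placeholder values only, NOT measured results:
    fps_very_high = 50.0         # "Very High" preset, HBAO+ enabled
    fps_very_high_no_hbao = 55.0 # same preset with HBAO+ disabled

    gain = percent_change(fps_very_high, fps_very_high_no_hbao)
    print(f"Disabling HBAO+ changed average FPS by {gain:+.1f}%")
```

Running the same calculation for each card, with each vendor's tech toggled on and off, gives numbers that can be compared side by side instead of relying on a single preset.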
